GPU-accelerated Neural Networks in JavaScript

According to the Octoverse 2017 report, JavaScript is the most popular language on GitHub. Measured by the number of pull requests, the level of JavaScript activity is comparable to that of Python, Java and Go combined.

JavaScript has conquered the Web and made inroads on the server, mobile phones, the desktop and other platforms.

Meanwhile, the use of GPU acceleration has expanded well beyond computer graphics and is now an integral part of machine learning.

Training neural networks with deep architectures is a computationally intensive process that has led to state-of-the-art results across many important domains of machine intelligence.

This article looks at the ongoing convergence of these trends and provides an overview of the projects that are bringing GPU-accelerated neural networks to the JavaScript world.

An overview

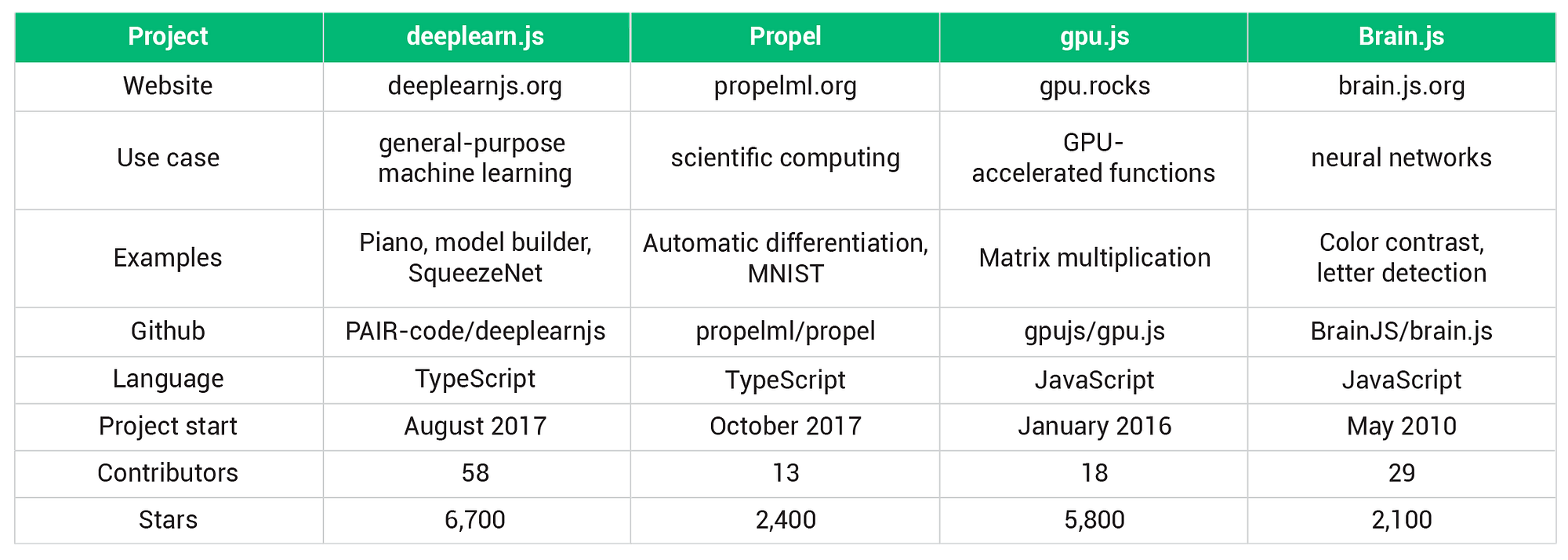

All projects listed below are actively maintained, have thousands of stars on GitHub and are distributed through npm or CDNs.

They all implement GPU acceleration in the browser through WebGL and fall back to the CPU if a suitable graphics card is not present.
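
Each library has its own detection logic, but the basic fallback pattern fits into a few lines. The helper below is hypothetical (it is not taken from any of the four projects). It receives a canvas factory as a parameter, so the logic does not depend on a browser DOM:

```javascript
// Hypothetical helper: choose a WebGL backend if a context can be created,
// otherwise fall back to the CPU. In a browser, pass
// () => document.createElement("canvas") as createCanvas.
function pickBackend(createCanvas) {
  try {
    const canvas = createCanvas();
    // "experimental-webgl" is the prefixed context name used by older browsers.
    const gl =
      canvas.getContext("webgl") || canvas.getContext("experimental-webgl");
    if (gl) {
      return "webgl";
    }
  } catch (err) {
    // No DOM, or context creation failed: treat this as "no usable GPU".
  }
  return "cpu";
}
```

A real library may also need to check for capabilities such as floating-point texture support before choosing the GPU path. This sketch ignores that step.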

Libraries that are designed to run existing models (especially those trained with Python frameworks) are not included in this overview.

In the end, four projects have made it onto the list.

While its feature set is geared towards neural networks, deeplearn.js can be described as a general-purpose machine learning framework. Propel is a library for scientific computing that offers automatic differentiation. Gpu.js provides a convenient way to run JavaScript functions on the GPU. Brain.js is a continuation of an older neural network library and uses gpu.js for hardware acceleration.

I intend to maintain this article and expand it into a GitHub repository. Please let me know if you come across relevant news.

Deeplearn.js

Deeplearn.js is the most popular of the four projects and is described as a “hardware-accelerated JavaScript library for machine intelligence”. It is supported by the Google Brain team and a community of more than 50 contributors. The two main authors are Daniel Smilkov and Nikhil Thorat.

Written in TypeScript and modeled after TensorFlow, deeplearn.js supports a growing subset of the features provided in Google Brain’s flagship open-source project. The API essentially has three parts.

The first part covers functions used to create, initialize and transform tensors, the array-like structures that hold the data.
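
To illustrate what creating, initializing and transforming a tensor involves, here is a deliberately minimal tensor type. It stores its values row-major in a flat Float32Array alongside a shape. The names Tensor, zeros, randomUniform and reshape are hypothetical and only convey the idea. They are not meant to reproduce the deeplearn.js API.

```javascript
// Hypothetical minimal tensor: values stored row-major in a flat typed array.
class Tensor {
  constructor(data, shape) {
    const size = shape.reduce((a, b) => a * b, 1);
    if (data.length !== size) {
      throw new Error(`data length ${data.length} does not match shape [${shape}]`);
    }
    this.data = data;
    this.shape = shape;
  }

  get size() {
    return this.data.length;
  }

  // Read one element by its multi-dimensional index.
  get(...indices) {
    let offset = 0;
    for (let i = 0; i < this.shape.length; i++) {
      offset = offset * this.shape[i] + indices[i];
    }
    return this.data[offset];
  }

  // Transform: reshape shares the underlying buffer and only changes the shape.
  reshape(newShape) {
    return new Tensor(this.data, newShape);
  }
}

// Create: a tensor filled with zeros.
function zeros(shape) {
  const size = shape.reduce((a, b) => a * b, 1);
  return new Tensor(new Float32Array(size), shape);
}

// Initialize: values drawn uniformly between min and max.
function randomUniform(shape, min = 0, max = 1) {
  const t = zeros(shape);
  for (let i = 0; i < t.size; i++) {
    t.data[i] = min + Math.random() * (max - min);
  }
  return t;
}

const m = new Tensor(Float32Array.from([1, 2, 3, 4, 5, 6]), [2, 3]);
console.log(m.get(1, 2)); // 6
console.log(m.reshape([3, 2]).get(2, 0)); // 5
```

Because reshape only reinterprets the same buffer, it costs nothing. Operations that change the order of elements, such as a transpose, would have to copy data.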

The next part of the API provides the operations that are performed on tensors. This includes basic mathematical operations, reduction, normalization and convolution. Support for recurrent neural networks is rudimentary at this point, but does include stacks of Long Short-Term Memory (LSTM) cells.
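
The kinds of operations named above can be illustrated on plain arrays. This sketch covers three of them:

- a reduction (sum and mean)
- a normalization to zero mean and unit variance
- a one-dimensional convolution without padding

The function names are hypothetical, and the code is not taken from deeplearn.js.

```javascript
// Reduction: collapse an array to a single number.
function sum(xs) {
  return xs.reduce((acc, x) => acc + x, 0);
}

function mean(xs) {
  return sum(xs) / xs.length;
}

// Normalization: shift to zero mean and scale to unit variance.
// epsilon guards against division by zero for constant inputs.
function normalize(xs, epsilon = 1e-8) {
  const mu = mean(xs);
  const variance = mean(xs.map((x) => (x - mu) ** 2));
  return xs.map((x) => (x - mu) / Math.sqrt(variance + epsilon));
}

// 1D convolution with "valid" padding and stride 1. As in most deep learning
// libraries, this is technically a cross-correlation (the kernel is not flipped).
function conv1d(input, kernel) {
  const out = [];
  for (let i = 0; i + kernel.length <= input.length; i++) {
    let acc = 0;
    for (let k = 0; k < kernel.length; k++) {
      acc += input[i + k] * kernel[k];
    }
    out.push(acc);
  }
  return out;
}

console.log(sum([1, 2, 3, 4])); // 10
console.log(conv1d([1, 2, 3, 4], [1, -1])); // [ -1, -1, -1 ]
```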

The third part revolves around model training. All of the popular optimizers, from stochastic gradient descent to Adam, are included. Cross-entropy loss, on the other hand, is the only loss function mentioned in the reference.
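
The update rules behind plain stochastic gradient descent and Adam, along with the cross-entropy loss, fit into a few lines. This is a minimal sketch over plain arrays that assumes the standard formulations and the commonly used Adam defaults (learning rate 0.001, beta1 0.9, beta2 0.999, epsilon 1e-8). The function names are hypothetical, and this is not the deeplearn.js implementation.

```javascript
// Plain stochastic gradient descent: step against the gradient.
function sgdStep(params, grads, learningRate = 0.01) {
  return params.map((p, i) => p - learningRate * grads[i]);
}

// Adam keeps running averages of the gradient (m) and of its square (v).
// Hypothetical factory: the optimizer state lives in a closure.
function createAdam(size, { learningRate = 0.001, beta1 = 0.9, beta2 = 0.999, epsilon = 1e-8 } = {}) {
  const m = new Array(size).fill(0);
  const v = new Array(size).fill(0);
  let t = 0;
  return function step(params, grads) {
    t += 1;
    return params.map((p, i) => {
      m[i] = beta1 * m[i] + (1 - beta1) * grads[i];
      v[i] = beta2 * v[i] + (1 - beta2) * grads[i] ** 2;
      // Bias correction compensates for initializing m and v at zero.
      const mHat = m[i] / (1 - beta1 ** t);
      const vHat = v[i] / (1 - beta2 ** t);
      return p - (learningRate * mHat) / (Math.sqrt(vHat) + epsilon);
    });
  };
}

// Cross-entropy between a one-hot label vector and predicted probabilities.
// epsilon avoids log(0) when a predicted probability is exactly zero.
function crossEntropy(labels, probs, epsilon = 1e-12) {
  let loss = 0;
  for (let i = 0; i < labels.length; i++) {
    loss -= labels[i] * Math.log(probs[i] + epsilon);
  }
  return loss;
}

// Usage: minimize f(x) = (x - 3)^2, whose gradient is 2(x - 3).
const adamStep = createAdam(1, { learningRate: 0.1 });
let params = [0];
for (let step = 0; step < 500; step++) {
  params = adamStep(params, [2 * (params[0] - 3)]);
}
console.log(params[0]);
```

The closure keeps Adam's moment estimates tied to one optimizer instance, so the per-parameter state persists between calls.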

The remainder of the API is used to set up the environment and manage resources.

Experimental GPU acceleration in Node.js can be achieved through headless-gl (see issue #49).

The project website has a number of memorable demos. These include piano performances by a recurrent neural network, a visual interface to build models and a webcam application based on a SqueezeNet (an image classifier with a relatively small number of parameters).

Propel

Propel is described as “differentiable programming for JavaScript”. The work of the two main authors, Ryan Dahl and Bert Belder, is complemented by eleven contributors.

Automatic differentiation (AD) is at the core of this project and frees us from the need to manually specify derivatives. For a given function f(x) defined with the supported tensor operations, the gradient function can be obtained using grad. The multi-variable case is covered by multigrad.
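
To show how automatic differentiation produces derivatives without any manual work, here is a tiny forward-mode implementation based on dual numbers. This technique was chosen for brevity. Deep learning libraries generally prefer reverse mode, which is cheaper when a function has many inputs and a single output, and this sketch makes no claim about how Propel implements grad. The helpers derivative and gradient are hypothetical stand-ins for the single- and multi-variable cases.

```javascript
// A dual number a + b·ε with ε² = 0: `val` carries f(x), `der` carries f'(x).
class Dual {
  constructor(val, der = 0) {
    this.val = val;
    this.der = der;
  }
}

// Plain numbers are treated as constants (derivative zero).
const lift = (x) => (x instanceof Dual ? x : new Dual(x, 0));

// Sum rule.
function add(a, b) {
  a = lift(a);
  b = lift(b);
  return new Dual(a.val + b.val, a.der + b.der);
}

// Product rule.
function mul(a, b) {
  a = lift(a);
  b = lift(b);
  return new Dual(a.val * b.val, a.der * b.val + a.val * b.der);
}

// Chain rule applied to sin.
function sin(a) {
  a = lift(a);
  return new Dual(Math.sin(a.val), Math.cos(a.val) * a.der);
}

// Hypothetical single-variable helper: seed dx/dx = 1 and read off the derivative.
function derivative(f) {
  return (x) => f(new Dual(x, 1)).der;
}

// Hypothetical multi-variable helper: one forward pass per input variable.
function gradient(f) {
  return (...xs) =>
    xs.map((_, i) => f(...xs.map((x, j) => new Dual(x, i === j ? 1 : 0))).der);
}

// f(x) = x·sin(x), so f'(x) = sin(x) + x·cos(x).
const f = (x) => mul(x, sin(x));
console.log(derivative(f)(0)); // 0

// g(x, y) = x·y + x, so ∂g/∂x = y + 1 and ∂g/∂y = x.
const g = (x, y) => add(mul(x, y), x);
console.log(gradient(g)(2, 3)); // [ 4, 2 ]
```

Forward mode needs one pass per input variable, which is why gradient loops over the inputs. Reverse mode recovers all partial derivatives from a single backward pass.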

Beyond AD, it is not entirely clear where the project is heading. While a “numpy-like infrastructure” is mentioned as a goal on the website, the API is under “heavy development” and includes functionality associated with neural networks and computer vision. Using the load function, the contents of NumPy .npy files can be parsed and used as tensors.
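
Loading .npy files is practical partly because the format is simple. A file consists of the following parts, in order:

- the byte 0x93 followed by NUMPY
- one byte each for the major and minor version
- a little-endian header length (2 bytes in version 1.0, 4 bytes in version 2.0)
- an ASCII header holding a Python dict literal with the dtype, memory order and shape
- the raw data

The sketch below parses that layout for little-endian float32 and float64 arrays in C order only. parseNpy is a hypothetical helper, not Propel's load function.

```javascript
// Hypothetical minimal .npy reader (format versions 1.0 and 2.0).
// Handles little-endian float32 ("<f4") and float64 ("<f8") data in C order only.
function parseNpy(arrayBuffer) {
  const bytes = new Uint8Array(arrayBuffer);
  const view = new DataView(arrayBuffer);

  // Magic string: the byte 0x93 followed by "NUMPY".
  const magic = [0x93, 0x4e, 0x55, 0x4d, 0x50, 0x59];
  if (bytes.length < 10 || !magic.every((b, i) => bytes[i] === b)) {
    throw new Error("not an .npy file");
  }

  // Version 1.0 stores the header length as uint16, version 2.0 as uint32.
  const major = bytes[6];
  if (major !== 1 && major !== 2) {
    throw new Error(`unsupported .npy version ${major}`);
  }
  const headerStart = major === 1 ? 10 : 12;
  const headerLen = major === 1 ? view.getUint16(8, true) : view.getUint32(8, true);
  const header = String.fromCharCode(...bytes.subarray(headerStart, headerStart + headerLen));

  // The header is a Python dict literal, e.g.
  // {'descr': '<f8', 'fortran_order': False, 'shape': (2, 3), }
  const descr = /'descr':\s*'([^']+)'/.exec(header);
  const order = /'fortran_order':\s*(True|False)/.exec(header);
  const shapeMatch = /'shape':\s*\(([^)]*)\)/.exec(header);
  if (!descr || !order || !shapeMatch) {
    throw new Error("malformed .npy header");
  }
  if (order[1] === "True") {
    throw new Error("Fortran-ordered arrays are not supported");
  }

  // "(2, 3)" -> [2, 3]; "(4,)" -> [4]; "()" -> [] (a scalar).
  const shape = shapeMatch[1]
    .split(",")
    .map((s) => s.trim())
    .filter((s) => s.length > 0)
    .map(Number);
  const size = shape.reduce((a, b) => a * b, 1);

  // Read values through the DataView so the data offset need not be aligned.
  const dataStart = headerStart + headerLen;
  let data;
  if (descr[1] === "<f4") {
    data = new Float32Array(size);
    for (let i = 0; i < size; i++) data[i] = view.getFloat32(dataStart + 4 * i, true);
  } else if (descr[1] === "<f8") {
    data = new Float64Array(size);
    for (let i = 0; i < size; i++) data[i] = view.getFloat64(dataStart + 8 * i, true);
  } else {
    throw new Error(`unsupported dtype ${descr[1]}`);
  }
  return { data, shape };
}
```

In a browser, the ArrayBuffer could be obtained with await (await fetch(url)).arrayBuffer(), where url points to an .npy file of your choosing. The resulting data and shape pair maps directly onto a tensor constructor.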

In a browser environment, Propel makes use of the WebGL capabilities in deeplearn.js. For GPU acceleration in Node, the project uses TensorFlow’s C API.

gpu.js

While most of my experience is with CUDA rather than WebGL, I can attest to the time-consuming nature of GPU programming. I was therefore pleasantly surprised when I came across gpu.js. With around 5,700 stars on GitHub, the project is comparable to deeplearn.js in terms of popularity and has 18 contributors. Several individuals have made substantial contributions over time. Robert Plummer is the main author.

A kernel, in the current context, is a function that is executed on the GPU rather than the CPU. With gpu.js, kernels can be written in a subset of JavaScript. The code is then compiled and run on the GPU. Node.js support through OpenCL was added a few weeks ago.

Numbers and arrays of numbers with up to three dimensions are used as input and output. In addition to basic mathematical operations, gpu.js supports local variables, loops and if/else statements.

To enable code reuse and allow for a more modular design, custom functions can be registered and then used from within the kernel code.

Within the JavaScript definition of a kernel, the this object provides the thread identifiers and holds values that are constant inside the actual kernel but dynamic on the outside.
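
The kernel model described above can be emulated on the CPU to show how the pieces fit together:

- the kernel body is an ordinary function
- this.thread holds the indices of the output element being computed
- this.constants holds values that are fixed for one launch
- a separately defined helper is called from within the kernel

runKernel is a hypothetical, purely sequential stand-in. gpu.js instead compiles kernel code for the GPU, and its actual API (including how output sizes and constants are passed) is documented in the project's repository.

```javascript
// Hypothetical CPU emulation of a kernel launch over a 2D output of size
// [width, height]. Each output element is computed by calling the kernel with
// `this` bound to an object exposing the thread indices and the constants.
function runKernel(kernel, { output: [width, height], constants = {} }, ...args) {
  const result = [];
  for (let y = 0; y < height; y++) {
    const row = [];
    for (let x = 0; x < width; x++) {
      // On a GPU, these iterations would run in parallel, one per thread.
      row.push(kernel.apply({ thread: { x, y, z: 0 }, constants }, args));
    }
    result.push(row);
  }
  return result;
}

// A custom function called from inside the kernel body (uses if/else).
function relu(v) {
  if (v > 0) {
    return v;
  } else {
    return 0;
  }
}

// Matrix product followed by ReLU: thread (x, y) computes out[y][x].
// A regular `function` is required; an arrow function would ignore the bound `this`.
function denseReluKernel(a, b) {
  let sum = 0;
  for (let i = 0; i < this.constants.size; i++) {
    sum += a[this.thread.y][i] * b[i][this.thread.x];
  }
  return relu(sum);
}

const a = [[1, 2], [3, 4]];
const b = [[1, -1], [1, -1]];
console.log(runKernel(denseReluKernel, { output: [2, 2], constants: { size: 2 } }, a, b));
// [ [ 3, 0 ], [ 7, 0 ] ]
```

The [width, height] ordering of the output size, with thread.x indexing columns, is an assumption of this sketch.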

The project specializes in accelerated JavaScript functions and does not attempt to provide a neural network framework. For that, we can turn to a library that depends on gpu.js.

Brain.js

Brain.js is the successor to harthur/brain, a repository with a history dating back to the ancient times of 2010.

In total, close to 30 individuals have contributed to these two repositories.

Support for GPU-accelerated neural nets is based on gpu.js and has, arguably, been the most important development in the project’s recent history.

In addition to feed-forward networks, Brain.js includes implementations of three important types of recurrent neural networks: classic Elman networks, Long Short-Term Memory (LSTM) networks and the more recent networks with Gated Recurrent Units (GRUs).
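
The differences between the three recurrent cell types become clearer when a single time step is written out. The sketch below uses scalar inputs and hidden states to keep the arithmetic visible. The weight names and values are hypothetical. The GRU follows one common convention for its update gate, but papers and libraries differ on whether z or 1 - z weights the previous state. This is not code from Brain.js.

```javascript
const sigmoid = (v) => 1 / (1 + Math.exp(-v));

// Elman network: the new hidden state mixes the input and the previous state.
function elmanStep(x, h, w) {
  return Math.tanh(w.wx * x + w.wh * h + w.b);
}

// LSTM: gates decide what to forget, what to write and what to expose.
function lstmStep(x, { h, c }, w) {
  const i = sigmoid(w.wi * x + w.ui * h + w.bi); // input gate
  const f = sigmoid(w.wf * x + w.uf * h + w.bf); // forget gate
  const o = sigmoid(w.wo * x + w.uo * h + w.bo); // output gate
  const g = Math.tanh(w.wg * x + w.ug * h + w.bg); // candidate cell value
  const cNext = f * c + i * g;
  return { h: o * Math.tanh(cNext), c: cNext };
}

// GRU: the update gate z interpolates between the old state and a candidate;
// the reset gate r controls how much of the old state feeds the candidate.
function gruStep(x, h, w) {
  const z = sigmoid(w.wz * x + w.uz * h + w.bz); // update gate
  const r = sigmoid(w.wr * x + w.ur * h + w.br); // reset gate
  const candidate = Math.tanh(w.wh * x + w.uh * (r * h) + w.bh);
  return (1 - z) * h + z * candidate;
}

// Run an Elman cell over a short sequence with hypothetical weights.
const elmanWeights = { wx: 0.5, wh: 0.8, b: 0 };
let state = 0;
for (const x of [1, 0, -1]) {
  state = elmanStep(x, state, elmanWeights);
}
console.log(state);
```

The gated variants add a few extra weighted sums per step. In exchange, the network can learn when to keep or overwrite its state, which is what makes LSTMs and GRUs easier to train on long sequences than plain Elman networks.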

The demos included in the repository are at an early stage. A neural network learning color contrast preferences is shown on the homepage. Two other demos, one involving the detection of characters drawn with ASCII symbols, can be found in the source code.

The advent of accelerated JavaScript libraries for machine learning has several interesting implications.

Online courses can integrate exercises related to machine learning or GPU computing directly into the web application. Students do not necessarily have to set up separate development environments across different operating systems and software versions.

Many demos based on neural networks can be deployed more easily and no longer require server-side APIs.

JavaScript developers interested in machine learning can make full use of their specialized skills and spend less time on integration issues.

Furthermore, computational resources available on the client side might be employed more efficiently. After all, not all graphics cards are utilized for virtual reality and cryptocurrency mining all the time.

To be clear, I do not advocate the use of the libraries mentioned in this article for mission-critical neural networks at this point. The Python ecosystem continues to be the obvious first choice for most applications.

It is, however, encouraging to see the progress that has been made over the last twelve months. Neither deeplearn.js nor Propel existed a year ago. Activity levels in the gpu.js repository were relatively low and Brain.js did not support GPU acceleration.

Over time, these projects will compete with the established frameworks in some regards and enable entirely new applications that JavaScript is uniquely suited for.
